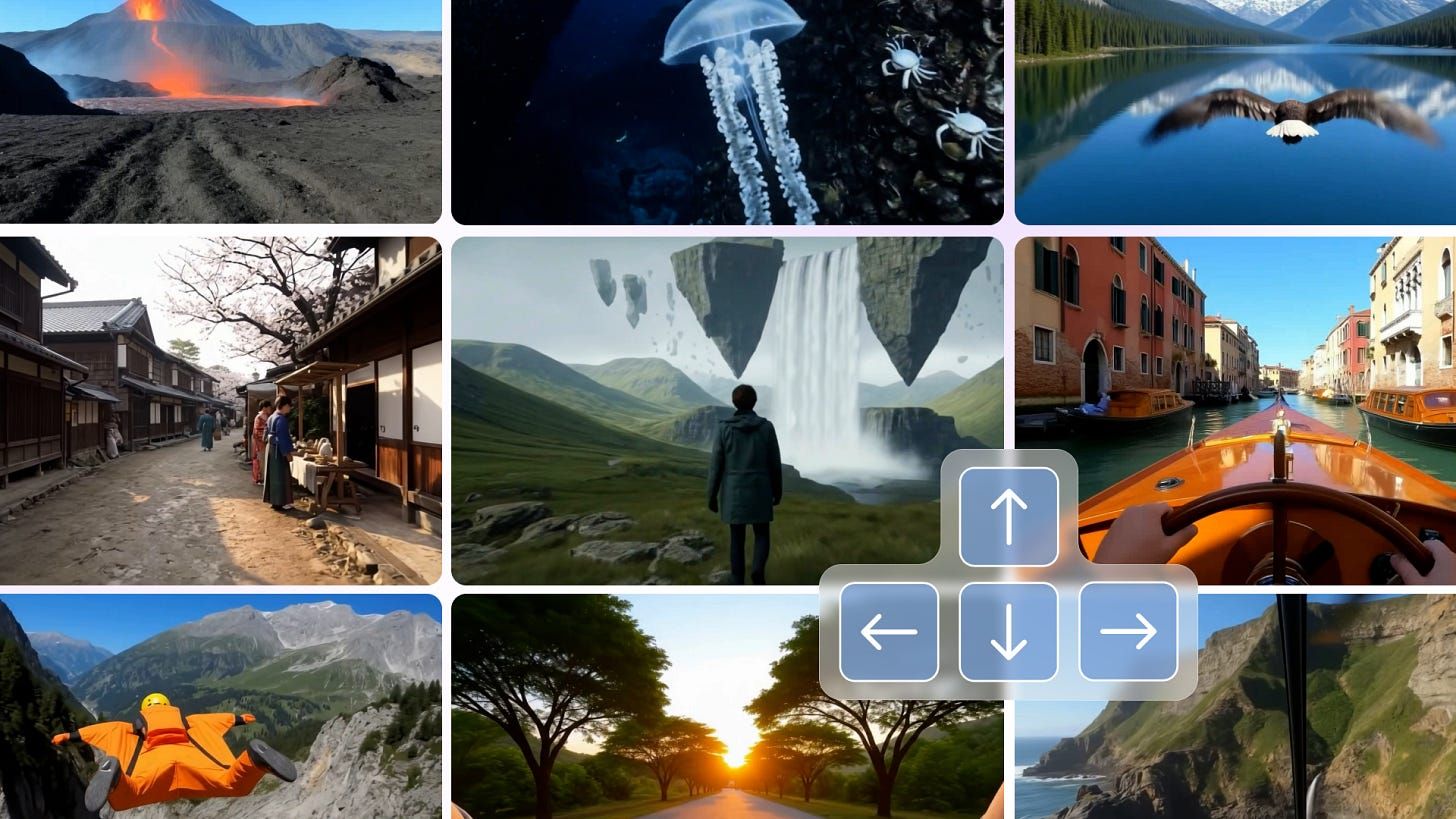

Google DeepMind just gave us a glimpse of an AI future where you can play inside your prompt: minutes-long, 720p, physics-aware 3D worlds spun up in real time. If that sounds like gamer candy, think again: Genie 3 could rewrite how we prototype, test and even sell digital products this quarter.

Data snapshot

Minutes-long interaction – Genie 3 supports “a few minutes” of continuous play versus the 10–20 second limit of Genie 2.

720p at 24 fps – first world model to hit HD-like resolution in real time on consumer hardware.

$109 billion in US private AI investment (2024) – nearly 12× China's total, fuelling rapid adoption of simulation-ready models.

Three key insights

World models move from research to revenue. Controlled previews hint at near-term enterprise pilots: think robotics vendors paying per simulated hour instead of building costly physical testbeds.

Promptable events unlock dynamic marketing assets. Genie 3 lets you change weather, characters or signage on the fly. Imagine A/B-testing in-app environments or ad creatives inside a live 3D scene, not a static screenshot.

Simulation memory is the real differentiator. Maintaining object state for ~60 seconds makes user journeys measurable. That opens the door to analytics hooks (heat maps, funnel drops, eye-tracking proxies) inside a fully generated space.
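To make the "funnel drops" idea concrete, here is a minimal Python sketch of how drop-off between steps might be computed from per-session event logs. Everything in it is an assumption for illustration: the step names, the session format and the helper functions are hypothetical, and nothing here reflects an actual Genie 3 API, which is not public.

```python
from collections import Counter

# Hypothetical ordered funnel steps; the names are illustrative only.
FUNNEL = ["enter_store", "view_paywall", "tap_subscribe"]


def funnel_counts(sessions, funnel=FUNNEL):
    """Count how many sessions reached each funnel step, in order."""
    reached = Counter()
    for events in sessions:
        step = 0  # index of the next funnel step this session must hit
        for name in events:
            if step < len(funnel) and name == funnel[step]:
                reached[funnel[step]] += 1
                step += 1
    return [reached[s] for s in funnel]


def drop_off(counts):
    """Fraction of sessions lost between each pair of consecutive steps."""
    return [
        0.0 if prev == 0 else 1 - cur / prev
        for prev, cur in zip(counts, counts[1:])
    ]


# Assumed input: one list of event names per simulated session.
sessions = [
    ["enter_store", "view_paywall", "tap_subscribe"],
    ["enter_store", "view_paywall"],
    ["enter_store"],
    ["view_paywall"],  # skipped the first step, so it is not counted
]
counts = funnel_counts(sessions)
print(counts)
print(drop_off(counts))
```

The same counting works whether the sessions come from human testers or scripted agents; only the event source changes.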

My take

I’ve spent the past year helping non-technical teams ship mobile apps with AI-assisted builders. The biggest bottleneck is still validation: How do you know a new layout, onboarding flow or store design will convert before you burn sprint cycles? Genie 3 hints at a solution.

Picture this: feed your product schema into a world model, teleport a cohort of test users (or agents) inside, and watch heat maps form in minutes. No Unity build, no TestFlight push. Marketers could iterate paywall copy in real time; PMs could observe retention-critical moments without instrumenting code.

Yes, the model is behind a velvet rope today. But DeepMind’s playbook mirrors every generative AI rollout we have seen: research preview → wait-list API → ecosystem of wrappers and vertical tools. By the time Genie 3 (or its open-source cousin) hits public beta, teams that already speak “simulation-native” will sprint ahead. I plan to start mapping analytics events to spatial coordinates now, because when the gates open, experimentation speed will decide winners.
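As a rough illustration of what "mapping analytics events to spatial coordinates" could look like, the Python sketch below attaches an (x, z) floor position to each event and bins the events into a coarse grid that can be rendered as a heat map. The `SpatialEvent` type, the `heat_map` helper, the 10-unit scene size and the event names are all assumptions made for this example; they are not part of any Genie 3 interface.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SpatialEvent:
    """An analytics event tagged with a floor position (hypothetical schema)."""
    name: str
    x: float
    z: float


def heat_map(events, size=10.0, cells=4):
    """Bin events into a cells x cells grid covering [0, size) on each axis."""
    grid = [[0] * cells for _ in range(cells)]
    cell = size / cells
    for e in events:
        # Ignore events that fall outside the assumed scene bounds.
        if 0 <= e.x < size and 0 <= e.z < size:
            col = min(int(e.x // cell), cells - 1)
            row = min(int(e.z // cell), cells - 1)
            grid[row][col] += 1
    return grid


events = [
    SpatialEvent("paywall_view", 1.0, 1.0),
    SpatialEvent("paywall_view", 1.5, 2.0),
    SpatialEvent("tap_subscribe", 9.0, 3.0),
    SpatialEvent("paywall_view", 12.0, 1.0),  # outside the scene, skipped
]
grid = heat_map(events)
for row in grid:
    print(row)
```

Keeping the position on the event itself means the same log can feed both a conventional funnel report and a spatial view, whichever engine eventually produces the coordinates.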

Action step

Set up a 90-day “sim-readiness” sprint: audit your current UX metrics, define which moments would benefit from immersive testing, and allocate one design sprint to prototype a lightweight 3D paywall or onboarding flow in an existing engine (Unity, Three.js). You’ll be ready to plug into Genie-style world models as soon as access lands.

Further reading

Google outlines latest step towards creating AGI with Genie 3 – The Guardian

Google’s new AI model creates video game worlds in real time – The Verge

2025 AI Index Report – Stanford HAI (investment & adoption stats)